Whether you're planning on getting into industry or staying in academia, I would strongly recommend reading around and familiarising yourself with what is now considered "old school". If I can get ~0.93 micro F1 on a text classification problem using bag-of-words features and a logistic regression model that will happily chug through 100k inferences/min on a $25/month virtual server, it is unlikely my customer will want to pay $500/month for the same throughput and 0.96 micro F1 using a fine-tuned Hugging Face BertForSequenceClassification model.

Regarding transformers and older methods "no longer" being useful: whilst some companies in industry (typically the well-funded incumbents like FAANG and unicorns) are obsessed with transformers, the rest of the industry is decidedly /NOT/ blinded by the transformers trend. At my company the philosophy is to start with simple models and move towards more complex modelling approaches only if you have to.
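To make the trade-off in the pricing example concrete, here is a rough back-of-the-envelope sketch in Python. The figures are the ones quoted above; the `Option` class, the 30-day month and the "cost per F1 point" measure are my own illustrative assumptions, not a standard metric.

```python
from dataclasses import dataclass

MINUTES_PER_MONTH = 60 * 24 * 30  # assumption: a 30-day month at full utilisation


@dataclass
class Option:
    """Hypothetical container for one deployment option."""
    name: str
    monthly_cost_usd: float
    inferences_per_min: int
    micro_f1: float

    def cost_per_million(self) -> float:
        """Dollars per one million inferences if the server is kept busy."""
        monthly_inferences = self.inferences_per_min * MINUTES_PER_MONTH
        return self.monthly_cost_usd / monthly_inferences * 1_000_000


# Figures taken from the example in the text.
baseline = Option("bag-of-words + logistic regression", 25.0, 100_000, 0.93)
bert = Option("fine-tuned BERT classifier", 500.0, 100_000, 0.96)

cost_ratio = bert.monthly_cost_usd / baseline.monthly_cost_usd
f1_gain = bert.micro_f1 - baseline.micro_f1
# Extra dollars per month paid for each additional 0.01 of micro F1.
extra_cost_per_f1_point = (
    bert.monthly_cost_usd - baseline.monthly_cost_usd
) / (f1_gain * 100)

for option in (baseline, bert):
    print(f"{option.name}: ${option.cost_per_million():.4f} per million inferences")
print(f"cost ratio: {cost_ratio:.0f}x for +{f1_gain:.2f} micro F1")
print(f"extra cost per F1 point: ${extra_cost_per_f1_point:.2f}/month")
```

With these numbers the customer would be paying 20 times the monthly bill for three extra points of micro F1. Whether that is worth it depends entirely on what a misclassification costs them, which is exactly the conversation to have before reaching for the bigger model.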

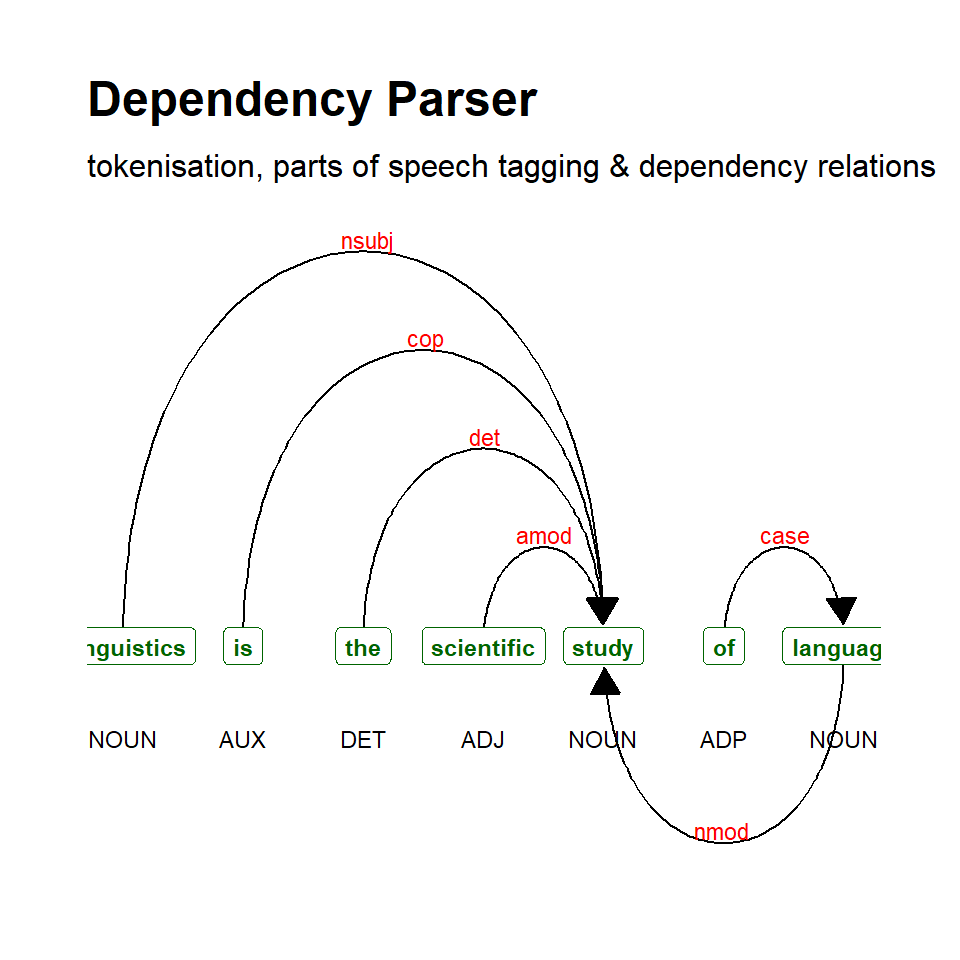

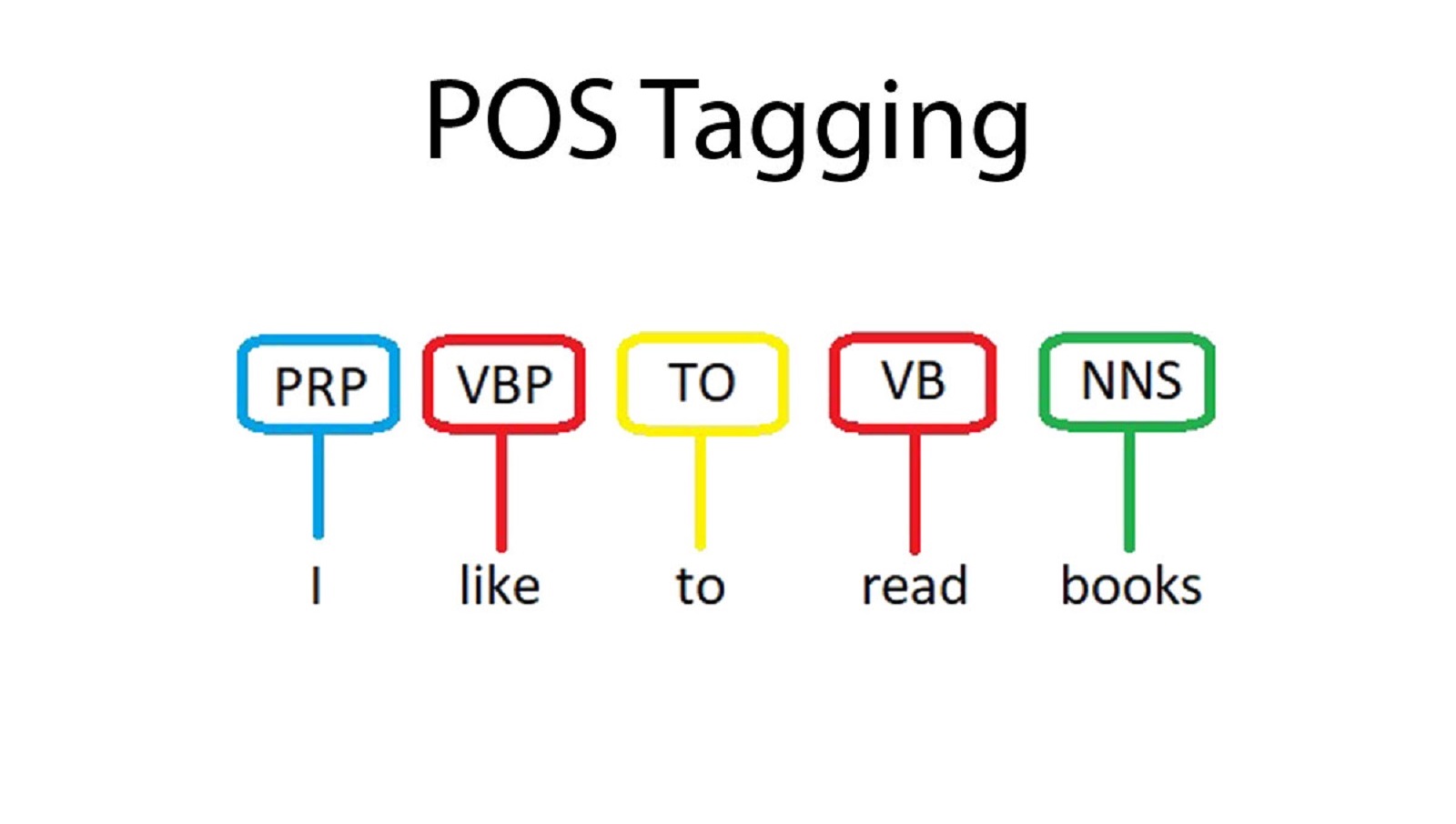

Both NER and POS tagging are useful upstream tasks that help with co-reference resolution and entity linking. Likewise, POS tagging to identify verb chunks and noun chunks, for the purpose of metadata enrichment or to improve document retrieval, is quite common.
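As a rough illustration of how POS tags feed noun-chunk extraction, here is a deliberately naive sketch in plain Python. It assumes the tokens have already been tagged with Universal POS tags by some upstream tagger; the `noun_chunks` function and its rule (optional determiners/adjectives followed by one or more nouns) are my own simplification, and a real chunker would be considerably more careful.

```python
from typing import List, Tuple

# Assumed input: (token, Universal POS tag) pairs from an upstream tagger.
TaggedToken = Tuple[str, str]

NOUN_TAGS = {"NOUN", "PROPN"}
MODIFIER_TAGS = {"DET", "ADJ"}


def _flush(current: List[TaggedToken], chunks: List[str]) -> None:
    # Keep the run only if it actually contains a noun.
    if any(tag in NOUN_TAGS for _, tag in current):
        chunks.append(" ".join(token for token, _ in current))


def noun_chunks(tagged: List[TaggedToken]) -> List[str]:
    """Collect runs of optional modifiers followed by one or more nouns."""
    chunks: List[str] = []
    current: List[TaggedToken] = []
    for token, tag in tagged:
        has_noun = any(t in NOUN_TAGS for _, t in current)
        if tag in NOUN_TAGS:
            current.append((token, tag))
        elif tag in MODIFIER_TAGS and not has_noun:
            # Modifiers may only come before the nouns of a chunk.
            current.append((token, tag))
        else:
            _flush(current, chunks)
            # A modifier seen after a noun starts a new candidate chunk.
            current = [(token, tag)] if tag in MODIFIER_TAGS else []
    _flush(current, chunks)
    return chunks


sentence = [
    ("The", "DET"), ("quick", "ADJ"), ("fox", "NOUN"), ("chased", "VERB"),
    ("a", "DET"), ("lazy", "ADJ"), ("dog", "NOUN"),
]
print(noun_chunks(sentence))
```

The extracted chunks can then be attached to a document as metadata or added to a search index as extra terms.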

NER, perhaps in combination with some form of co-reference resolution, can be useful in and of itself for some use cases: for example, clients might want to group/filter documents by which people and organisations are mentioned most within them. In industry we're mostly pragmatic engineers who aren't aiming for SOTA but for "whatever works and is cheapest".
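The group/filter use case needs very little machinery once the entities have been extracted. Below is a minimal sketch in plain Python, assuming some NER model has already produced `(text, label)` pairs per document. The document IDs, entity names, label set and both helper functions are made up for illustration.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple

Entity = Tuple[str, str]  # (surface text, label), assumed NER output


def top_entities(
    entities: List[Entity],
    labels: Sequence[str] = ("PERSON", "ORG"),
    n: int = 3,
) -> List[Tuple[str, int]]:
    """Most frequently mentioned people/organisations in one document."""
    counts = Counter(text for text, label in entities if label in labels)
    return counts.most_common(n)


def filter_by_entity(
    docs: Dict[str, List[Entity]], name: str, min_mentions: int = 1
) -> List[str]:
    """IDs of documents that mention `name` at least `min_mentions` times."""
    return [
        doc_id
        for doc_id, ents in docs.items()
        if sum(1 for text, _ in ents if text == name) >= min_mentions
    ]


# Hypothetical NER output for two documents.
docs = {
    "doc1": [("Acme Corp", "ORG"), ("Jane Doe", "PERSON"),
             ("Acme Corp", "ORG"), ("London", "GPE")],
    "doc2": [("Jane Doe", "PERSON"), ("Jane Doe", "PERSON"),
             ("Globex", "ORG")],
}

print(top_entities(docs["doc1"]))
print(filter_by_entity(docs, "Jane Doe", min_mentions=2))
```

Note that this matches on the exact surface string, so "Jane Doe", "Ms Doe" and "she" would all be counted separately. That gap is where co-reference resolution or entity linking earns its keep.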
